As I’ve mentioned many times on this blog, Critical Thinking is a skill that does not exist in isolation. Critical Thinking piggybacks on communication skills like reading, writing, speaking, listening, viewing and representing. Therefore, if students struggle with one of these skills, it might hinder their ability to show us their critical thinking. For example, if we only ever assess Critical Thinking through written products, then students who struggle with writing are always at a disadvantage, despite perhaps being very proficient critical thinkers. When assessing Critical Thinking, it is important to diversify both the medium (reading, writing, speaking, listening, viewing and representing) and the mode (observation, conversation, product). As such, early in the school year we may assess Critical Thinking via a student observation and follow-up conference, while later on we may assess it via written or creative products.

In this post, I’ll be sharing with you a variety of tools for assessing Critical Thinking as it relates to the three dimensions we’ve been focusing on: connecting ideas, problem solving, and inferring. Like everything about teaching, these tools are a work in progress and aren’t perfect. Please feel free to adapt them to suit your grade or curricular context.

Connecting Ideas

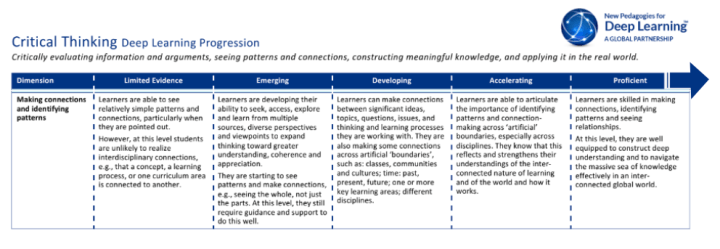

New Pedagogies for Deep Learning has created very detailed progressions for all of the 6C’s. That being said, they are progressions, not rubrics. They aren’t anchored in a particular grade, curriculum, or learning task. Instead, they focus on generalized indicators or behaviours a teacher might observe in students over time as they engage in deep learning experiences.

I like to use the language of the progression as inspiration to build assessment tools that cater to a specific grade and curricular context. For instance, perhaps you are a middle school teacher looking to assess how well your students are able to make connections between ELA and Social Studies on the topic of Human Rights. Here’s the language of the progression I’d borrow to “cut and paste” a more streamlined assessment tool:

A student showing limited evidence of connecting ideas might be able to state that in Social Studies they are learning about Human Rights, and in ELA they are reading a novel about a Residential School; however, they can’t articulate how the two are related without leading or prompting. An emerging student might be able to state that Human Rights and residential schools are connected, but can’t provide specific evidence of how or why. A developing or accelerating learner might be able to use details in the novel to discuss how human rights were abused in residential schools. And a proficient learner might be able to build on that understanding and discuss how it all connects to reconciliation.

As you can see from this general example, a progression can help us assess a learner’s ability to think critically (Connecting Ideas) over time. It’s not anchored in one specific learning task, but rather in a series of rich learning experiences.

Problem Solving

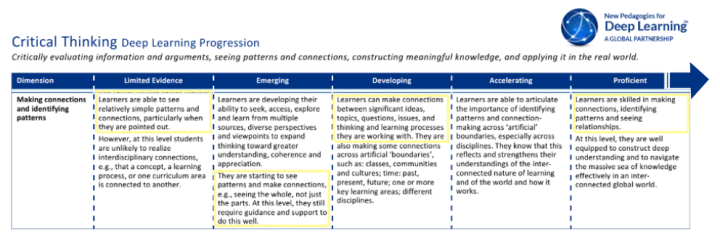

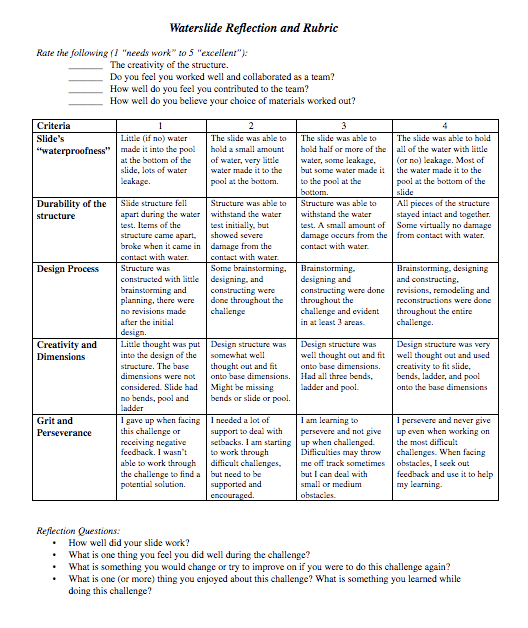

Often it’s easiest to assess problem solving when it is embedded within an authentic and purposeful task. I was recently in a grade 3/4 classroom, supporting cross-curricular Deep Learning. As part of the ELA/Social Studies content, we were examining a child’s right to education and play. As shown in my previous posts, we explored James Mollison’s photography of playgrounds around the world (and a few other great texts I’ll share in a future post 😉). After considering the purpose of playgrounds, the students in the class really wanted to design their own playground blueprints, which then led to written descriptions rich in sensory language (again, deets in an upcoming post). To connect to Math (budgeting and money) and Science (structures) outcomes, the students were given a playground-related design challenge (shown above).

This design challenge was full of problem solving. The judging criteria were clear, yet gave students plenty of room to be creative. Everything from budgeting supplies to sketching and building a design had students flexing their problem-solving muscles in real time. After the students had built and tested their designs (with water), we held a class discussion and debrief, and students completed a personal process reflection.

While the reflection doesn’t have a specific category for problem solving, the language and mindset of problem solving are evident throughout. The classroom teacher and I circulated constantly throughout the entire process. We collected notes from quick design conferences with students, captured photographs and videos of the build process, and had students submit personal reflections at the end (some written, some oral). By the end of the whole thing, we had more than enough triangulated data (observations, conversations, products) to assess the students’ ability to problem solve.

Inferencing

I often hear the question “But what does Critical Thinking look like in grade –?”, and I usually respond with “It depends.” Not a very satisfying answer, is it? But the reality is, it does depend. Consider this in the context of Critical Thinking: Inferencing. Here are some questions I usually ask teachers:

- What type of text was used for the inferring? Picture book, novel, photograph, animated short?

- Who picked it? Teacher, student, other?

- How complex is the text?

- How many times have students interacted with this genre of text, and this text specifically?

- How much front loading was done around the text, topic, theme?

- How did students show their understanding? Conversation? Written product?

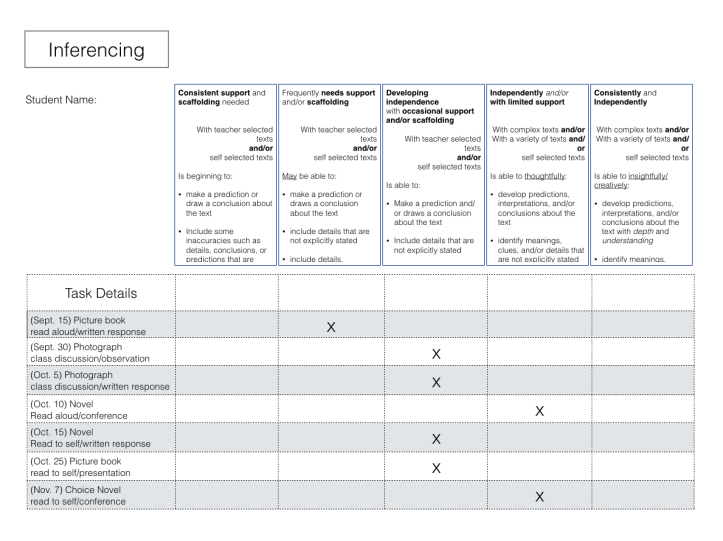

Inferencing is a dimension of Critical Thinking that lends itself to practice in many forms. Once students consistently and independently demonstrate inferencing with one type of text, it is possible to increase the complexity of the text or change up the genre of text altogether. With this in mind, I prefer an assessment tool that is flexible and lends itself to the collection of evidence over time. This way we can start to see patterns in student learning. See the example above.

A quick look at this example gives us a lot of information about the student’s ability to think critically and show inferencing. Generally speaking, this student is at the “developing independence” level, as they still need occasional scaffolding. However, they tend to show more thoughtfully developed responses in conferencing situations. They are not yet working independently when inferencing, but I suspect that by December, they should be. It is important to note that the evidence of student learning collected on this chart is a mixture of text types (picture book, photograph, novel), text delivery (read aloud, read to self), and products (written response, class discussion, conferencing). While the above example is focused on the criteria for inferencing, it would be quite possible to change the language to reflect any aspect of Critical Thinking, including the Connecting Ideas progressions discussed above. Click here for a downloadable template of this collection tool.

In Conclusion

I’m 1000 words into this post, and I haven’t even scratched the surface of the many ways we could assess Critical Thinking. The tools I’ve shared in this post are imperfect and in development, but hopefully they’ve begun to clarify how you might assess Critical Thinking in your classroom. I’d love to hear your thoughts, so please leave them in the comments below!